Yes, there are organizations that work with zero errors, or at least do not regard even a single error as acceptable (although errors do sometimes happen). These are called High Reliability Organizations (HROs).

When we see that some industries have a thousand times fewer errors than the health industry, we can learn something from their methods.

The most renowned HROs are nuclear plants, aircraft carriers, missile facilities, semiconductor factories, air traffic control and the aviation industry in general.

They are organizations where no rate of error is considered acceptable. They work with the criterion of never events: events that must not happen under any circumstances.

Many reasons explain this result:

- Level of investment: whatever is necessary is invested to reach zero errors.

- Economic penalty for errors. In health, many events do not penalize the institution economically and may even benefit it, as with an extended length of stay.

- Degree of automation of operations.

- Training and qualification.

- Systemic safety culture, which we will see below.

During 2015 the aviation industry made 29 million flights with 16 accidents.

That is, 0.55 accidents per million flights.

In health, the rate of adverse events is 10%, and 50% of them could be avoided. This figure is given by the World Health Organization (WHO) and many others, and some consider that the real rate may be three times higher.

Ten percent of 1 million is 100,000, and if we take out the unavoidable events, we reach 50,000.

That is, 0.55 versus 50,000, or about 90,000 times higher.
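The comparison above can be reproduced as a short calculation. This is a minimal sketch that uses only the figures quoted in the text. The variable names are illustrative, and it assumes the 10% rate applies per million patients, a denominator the text does not state explicitly.

```python
# Figures quoted in the text.
flights = 29_000_000        # flights made by the aviation industry in 2015
accidents = 16              # accidents in those flights

adverse_event_rate = 0.10   # share of adverse events in health (WHO figure cited in the text)
avoidable_share = 0.50      # share of those events that could be avoided

PER_MILLION = 1_000_000     # common base for both rates (assumed: per million patients)

# Accidents per million flights.
aviation_rate = accidents / flights * PER_MILLION

# Avoidable adverse events per million.
health_rate = adverse_event_rate * avoidable_share * PER_MILLION

# How many times higher the health rate is.
ratio = health_rate / aviation_rate

print(f"Aviation: {aviation_rate:.2f} accidents per million flights")
print(f"Health:   {health_rate:,.0f} avoidable events per million")
print(f"Ratio:    about {ratio:,.0f} to 1")
```

With the unrounded aviation rate the ratio comes out near 90,600, which is consistent with the rounded figure of about 90,000.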

If we make the comparison using the number of deaths, the ratio is much worse.

After visiting hospitals in more than 10 countries, I find a great disparity in their level of safety.

- In many countries, patient safety is still not discussed.

- We are still fighting to remove potassium chloride from the nursing units and keep it only in the pharmacy. A simple task becomes something heroic.

- Many packages look identical.

- We do not use basic technology such as bar codes.

- There is 30% waste, which prevents investment in automation.

- There is no senior management of quality.

- A policy of blaming health personnel is applied.

- There is an authority gradient among people working in health, which makes it difficult to speak up.

- Governments buy expensive equipment, but they do not invest in basic aspects of the population's health, such as potable water and sewage.

- Little is invested in prevention and general health education, which would reduce the expense of correcting the problems caused.

- Other industries, such as laboratories, food and education, have no obligation to help.

- Etc.

This means that we have a lot to do in the health system, and the first change we must make is in attitude.

An organization is reliable when, facing abnormal fluctuations in the internal and external conditions of the system, it keeps its results within the desired range.

Having desirable results when input parameters are controlled is not enough.

Regarding the change in attitude, there is growing concern about the influence of cognitive processes on results. Here we speak of the technique of mindfulness.

Mindfulness refers to the quality of our attention, rather than to merely sustaining attention.

Therefore, knowledge or perception of the context is very important.

The repertoire of HRO criteria that we can apply is summarized as follows:

- Preoccupation with failure.

There is an awareness that failures can occur. To err is human, and it is almost a natural condition that we can fail. Automatic systems and their algorithms do not interpret every real-world situation; they handle only the situations that were logically anticipated. Several factors acting simultaneously lead to unplanned results. HROs study minor errors and near misses with the same determination as major failures. Management is oriented toward studying failures as much as toward maintaining productivity.

- Reluctance to simplify interpretations.

In a complex system, simplification is a methodological error. We can never give a simple or simplistic answer to a problem that occurred in a complex system. We must consider the limitations imposed by the context: our mental models, the limitations of the physical structure, the limitations of logical thinking, and the parts of the whole that we are not seeing. We must also avoid disregarding intuitions.

- Sensitivity to Operations.

"Having the bubble" is a term used in the navy for when a commander perceives the whole set of elements that make up the complex reality of the environment, together with the operational dynamics. It means grasping the whole situation through instruments integrated with human action, and being aware of possible misinterpretations, near misses, system overloads, distractions, surprises, confusing signals, interactions, and so on.

- Commitment to Resilience.

It refers to anticipating possible problems, even several at once, and training to resolve them when they come. Resilience here means overcoming the surprises that some incidents may produce. Be prepared for error: accept its inevitability and prepare for it.

- More horizontal hierarchical structures.

Studies indicate that in institutions with a strongly defined hierarchy, errors spread faster, helped by organizational efficiency. This happens mostly when the incident originates at high organizational levels rather than at the bottom. An organization that works more by consensus helps prevent these problems. The term "organized anarchy" has even been used to describe this as a means of control. Responsibility for solving an incident falls more to the experts on the subject than to the hierarchical leaders. Being present at the time of the event also determines who can make decisions. A weak hierarchical structure is a desired characteristic.

Having desirable results when the input parameters are controlled is not enough. High Reliability Theory (HRT) (Charles Perrow) holds that stable inputs are not what give us stable results.

We must also mention that our vision of safety is the one James Reason explains in his book The Human Contribution.

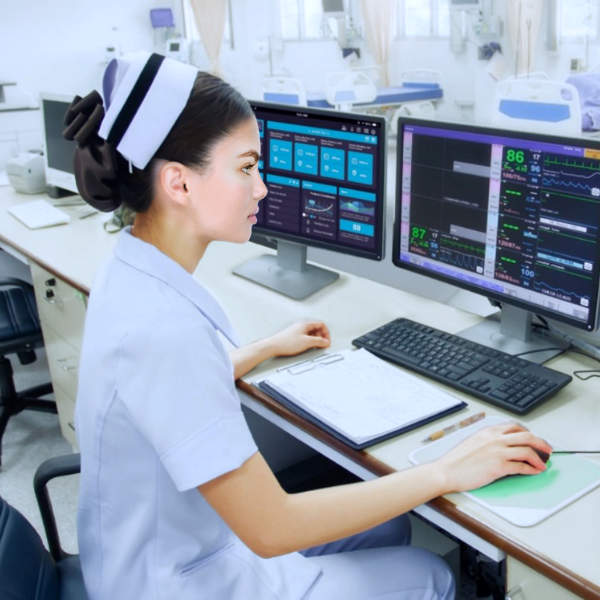

Our preferred model is to have two systems working simultaneously: the computer system, which automatically manages all operations through predefined algorithms, and human control, which follows events step by step to correct and improve safety.